Debuggers Aren’t For Debugging

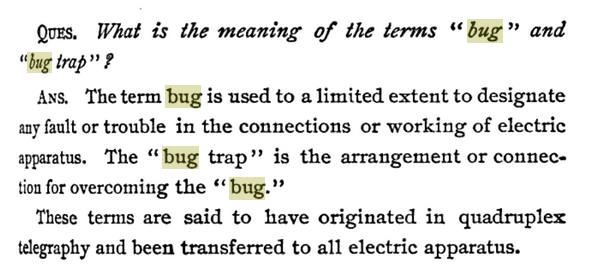

In a counterfactual past, perhaps the term “bug” was never adopted in the 1800s.

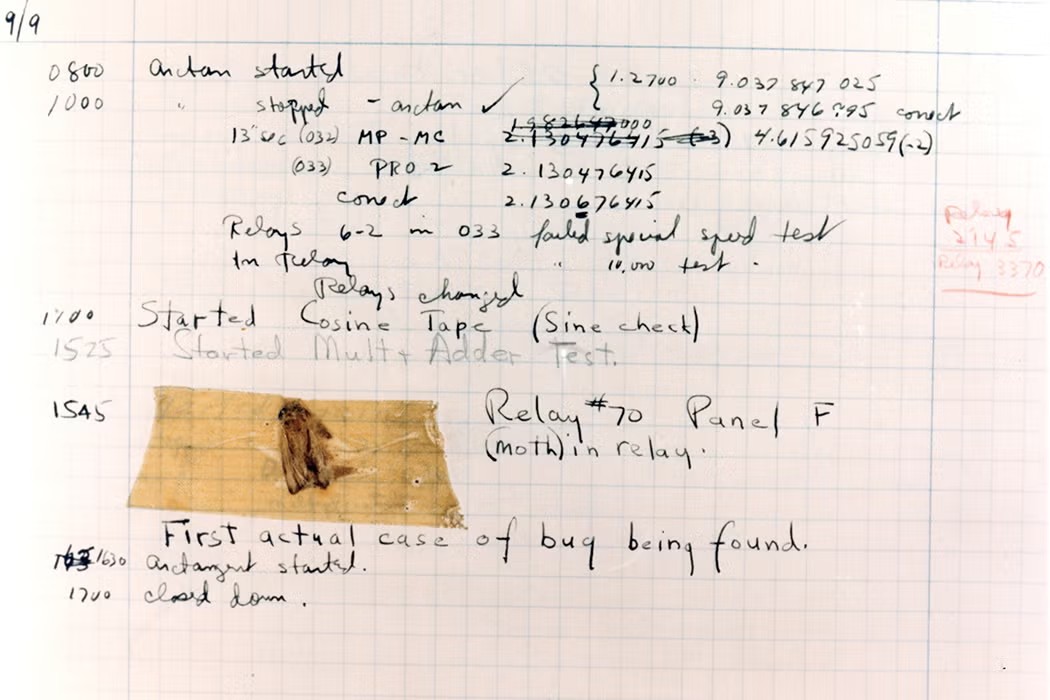

And perhaps this pesky bug was never captured and never became famous.

And in this alternate history, perhaps a different name would have been given to the tools we now call “debuggers”. Nearly any other name would have been preferable since de-bugging is something debuggers do not do. They’re fantastically helpful with debugging, but so are many other things. Debuggers mainly do two things: time dilation and state inspection. Basically, slow down and show me the bytes in a form I can understand.

Instead, maybe they would have been named after Alan Turing’s debugger, in which he connected a loudspeaker to an early computer so he could hear the unfolding of the program. I came across this in a Claude Shannon biography. Turing’s name for this was “put a pulse to the hooter”, not exactly a phrase ripe for branding a general tool.

Still, Turing’s loudspeaker was a perfect example of what debuggers really do. They don’t eliminate bugs. They show you what is going on inside the computer.

I’m not here to suggest any better names. “Debugger” is a foundational part of the lexicon. I’m just lamenting the accident of history that may have contributed to the under-use of debuggers we see today.

I’m increasingly seeing talk of AI agents doing debugging for us. AI agents are actually capable of “debugging” in the sense that they can remove bugs (yes, the eager naysayer is correct, they can introduce them too). Even today they are more capable of active debugging than a debugger. But they don’t have great facilities, currently, for time dilation and state inspection of programs. However good they are at finding bugs by looking at code semantically, or syntactically, or running it with print-debugging, they would be better with the ability to step through code, observe branches taken, and inspect values along the way.

My thesis is: both humans and agents should be using debuggers more.

# Why we still need debuggers

In 2026, the balance of effort is shifting quickly away from code production and into code review. Code review was already difficult before AI codegen was possible, and now programmers who have adopted these tools are producing 10x their previous amount of code, or more. And those programmers are likely spending even less time in debuggers than they were before (which was already very little).

Adapting to this new world is a quest. Many are saying “AI do code” and “AI also review code” is the path, and that may be. But if AI does not also perform deep state inspection, avoidable bugs and vulnerabilities will slip through.

Imagine this:

1. You prompt a coding agent to implement a feature, with all the necessary guidance and constraints.

2. The coding agent generates code for the feature.

3. The coding agent generates tests for the feature and ensures they pass. (Swap steps 2 and 3 if you like TDD.)

4. The coding agent runs each test in a debugger, stepping line by line through relevant code, and inspects intermediate untested state.

Steps 1-3 are all possible today, but step 4 isn’t hooked up yet in any tools I’m aware of. I think it would be a fantastic experiment. My experience with Opus 4.5 and 4.6 suggests it would be quite good at this even without further training. LLM context windows pose a big challenge for this idea, though. Debuggers can generate a lot of text (ever run `i fu`, short for `info functions`, in gdb?). To be effective, we’d need to make an agent clever about token filtering in gdb/lldb/DAP/etc., the same way Claude Code uses `head` and `tail` to prevent token bloat.
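To make the token-filtering idea concrete, here is a minimal sketch in Python of a head/tail clip applied to debugger output before it reaches an agent. The function name `clip_output` and the fake symbol dump are made up for illustration; a real integration would more likely filter at the gdb/lldb/DAP layer than on raw text.

```python
def clip_output(text: str, head: int = 5, tail: int = 5) -> str:
    """Keep the first `head` and last `tail` lines, noting how many were dropped."""
    lines = text.splitlines()
    if len(lines) <= head + tail:
        return text  # short enough already; pass it through untouched
    omitted = len(lines) - head - tail
    marker = f"... [{omitted} lines omitted] ..."
    # Slice from an explicit index so that tail=0 keeps no trailing lines.
    return "\n".join(lines[:head] + [marker] + lines[len(lines) - tail:])


# Stand-in for a noisy debugger dump; the addresses and names are invented.
fake_dump = "\n".join(f"0x{0x1000 + 16 * i:08x}  func_{i}" for i in range(1000))

clipped = clip_output(fake_dump, head=3, tail=2)
print(clipped)
```

The marker line matters as much as the clipping: it tells the agent that output was dropped and how much, so it can ask for a narrower query instead of assuming it saw everything.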

Taking this even further, the agonizing task of reviewing AI-generated code could be more interactive too. The debugging runs from step 4 above could save a recording, like time-traveling debuggers do. Reviewing the code could be less about understanding an alphabetically sorted list of file differences, and more of an interactive walk through the code as it actually ran. It would be possible to scrub back and forth through the code and observe values computed and branches taken. Perhaps there’s even a creative way to diff debugger recordings, in addition to the code. Alongside a code diff like this:

    - let x = y * 2;
    + let x = y * 4;

Imagine seeing a visualized execution diff of the before & after debugger recordings, showing how the value differs during program execution on the two branches.

      (gdb) y = 100;
    - (gdb) x = 200;
    + (gdb) x = 400;

The two forms of diff visualization, side by side, would offer a great way to understand incoming code changes.
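As a rough illustration of what an execution diff might look like, the sketch below uses Python's `sys.settrace` to record the local variables at each executed line of two versions of a function, then compares the two recordings with `difflib.unified_diff`. The names `record`, `before`, and `after` are hypothetical, and this is a toy stand-in for a real time-traveling debugger recording, not a description of how any existing tool works.

```python
import difflib
import sys


def record(func, *args):
    """Run func(*args), logging each executed line and the locals at that point."""
    log = []
    code = func.__code__

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # do not trace into any other Python function
        if event == "line":
            # Line numbers are relative to the `def` line so that two versions
            # of a function living at different places in a file still align.
            rel = frame.f_lineno - code.co_firstlineno
            # "line" events fire before the line runs, so this is the state
            # the line is about to operate on.
            state = ", ".join(f"{k}={v!r}" for k, v in sorted(frame.f_locals.items()))
            log.append(f"line +{rel}: {state}")
        elif event == "return":
            log.append(f"return {arg!r}")
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        sys.settrace(previous)  # restore whatever tracer was installed before
    return log


def before(y):
    x = y * 2
    return x


def after(y):
    x = y * 4
    return x


trace_before = record(before, 100)
trace_after = record(after, 100)
diff_lines = list(
    difflib.unified_diff(trace_before, trace_after, "before", "after", lineterm="")
)
print("\n".join(diff_lines))
```

Even on this tiny example the diff shows the changed value of `x` and the changed return value, with the unchanged `y=100` line kept as context. Real programs would need the filtering and determinism concerns addressed before a diff like this stays readable.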

This example is oversimplified to get the point across. It’s easy to imagine many patches resulting in oversized, indigestible execution diffs. Or noisy execution diffs due to nondeterminism. There are a lot of reasons this would be hard to do well, but if you can imagine how nice it would be if done well, it may just be worth doing (well).

These are my thoughts on three things: how debugger avoidance is a missed opportunity for deeper understanding, even in the absence of bugs; how debuggers can help improve coding agents; and how debuggers could play a role in coping with the review burden caused by coding agents.

Thank you for coming to my onomastic cascade of perhapses. Perhaps you stayed till the end, in which case I thank you.